One day, I was doing some support for Plain, the company I work at.

I see an issue pop up about our daily Slack digest feature not working, despite it being toggled on.

Quite weird; it’s the first time I’ve seen this. My knee-jerk reaction is to start digging into logs to work out what happened.

However, this time I thought I’d experiment. Why not try and steer Claude Code to do the work for me?

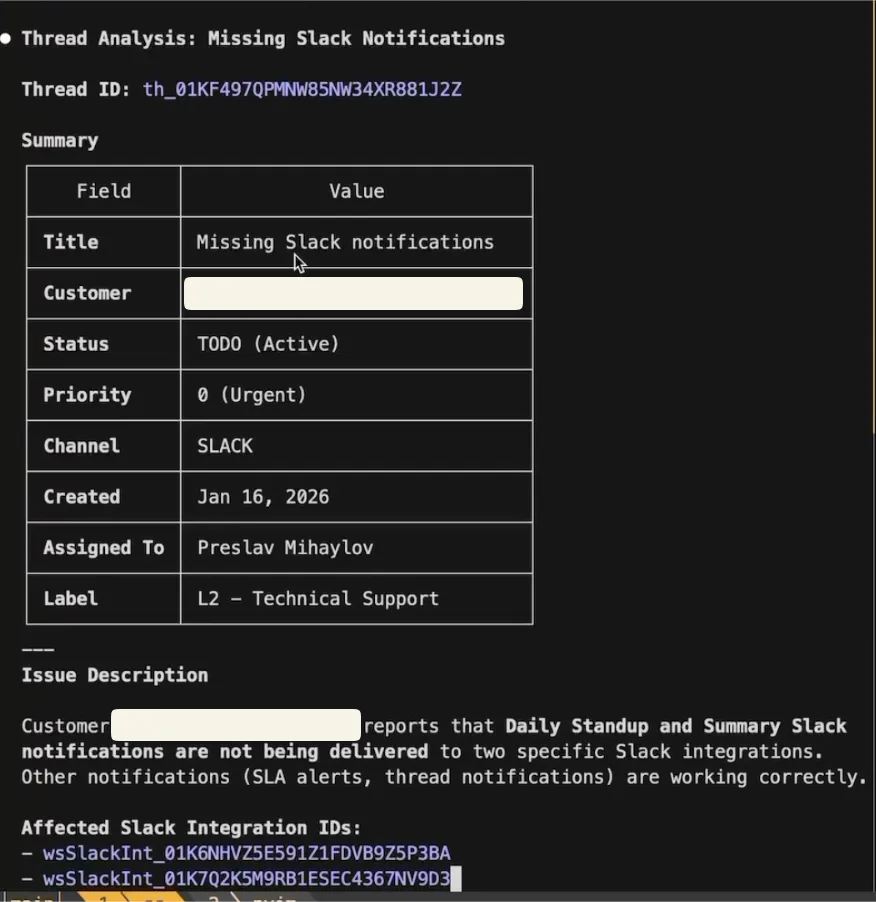

So the first thing I did was to connect it to our Plain support workspace and let it gather the data it needs about the ticket.

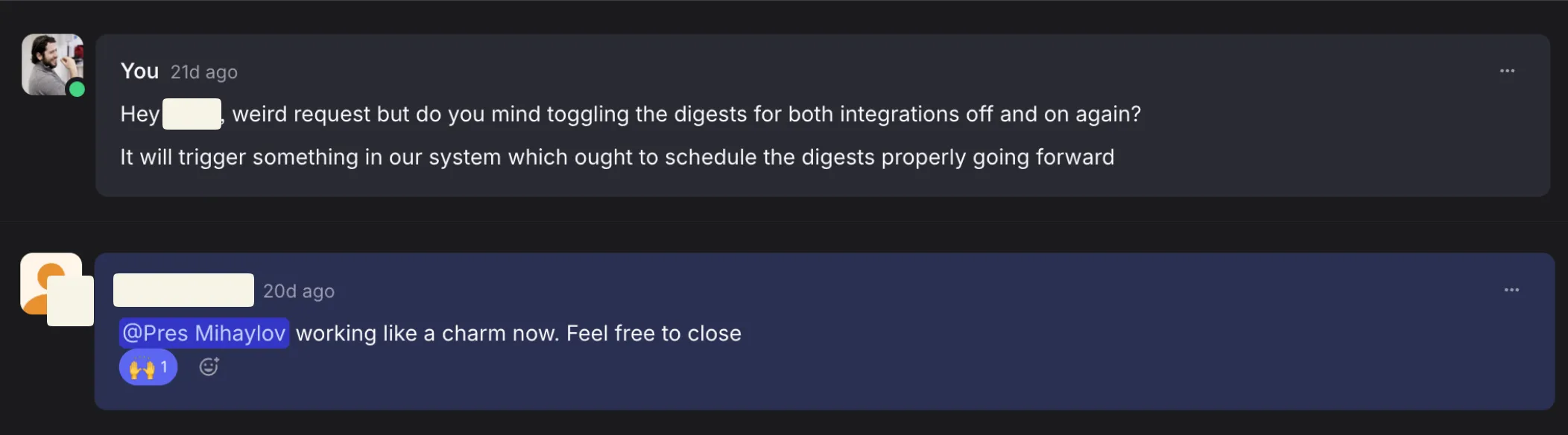

A few tool calls later, I see this:

Quite cool! Claude managed to work out the issue without me having to go back and forth copy-pasting context.

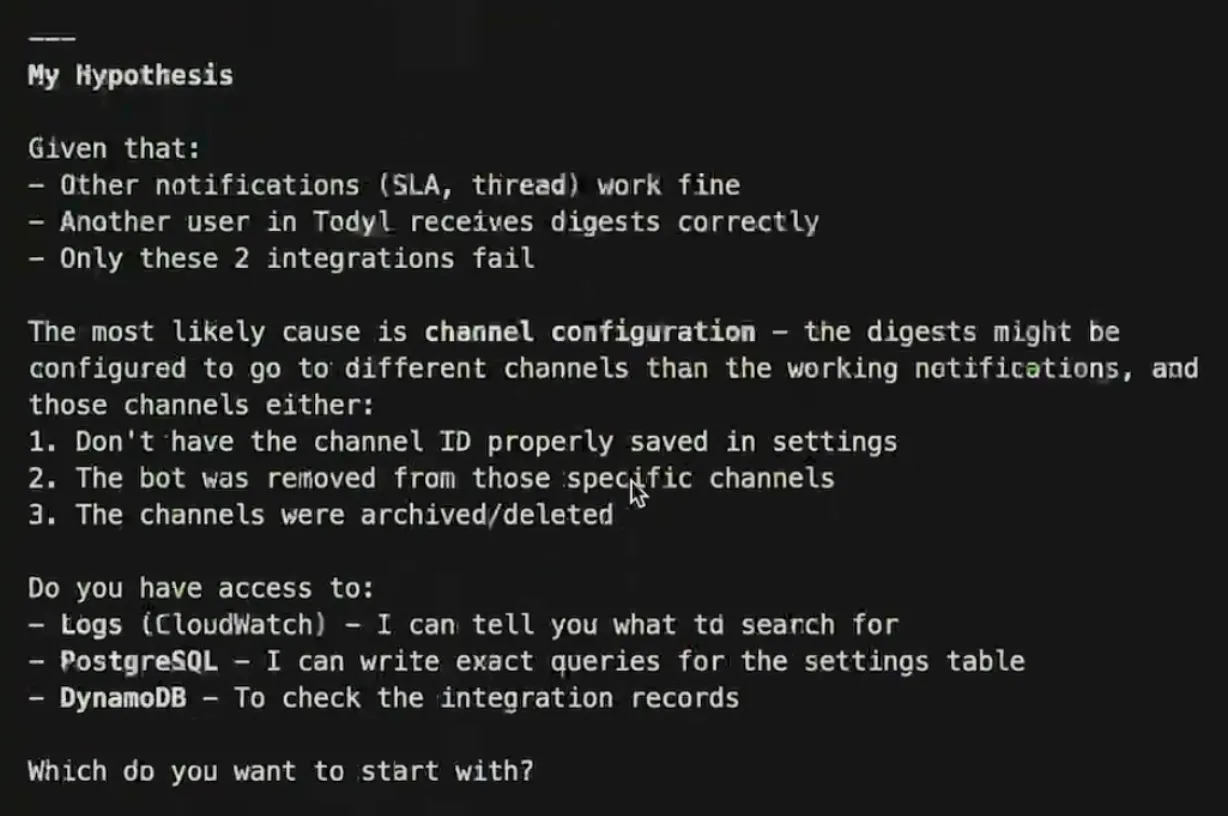

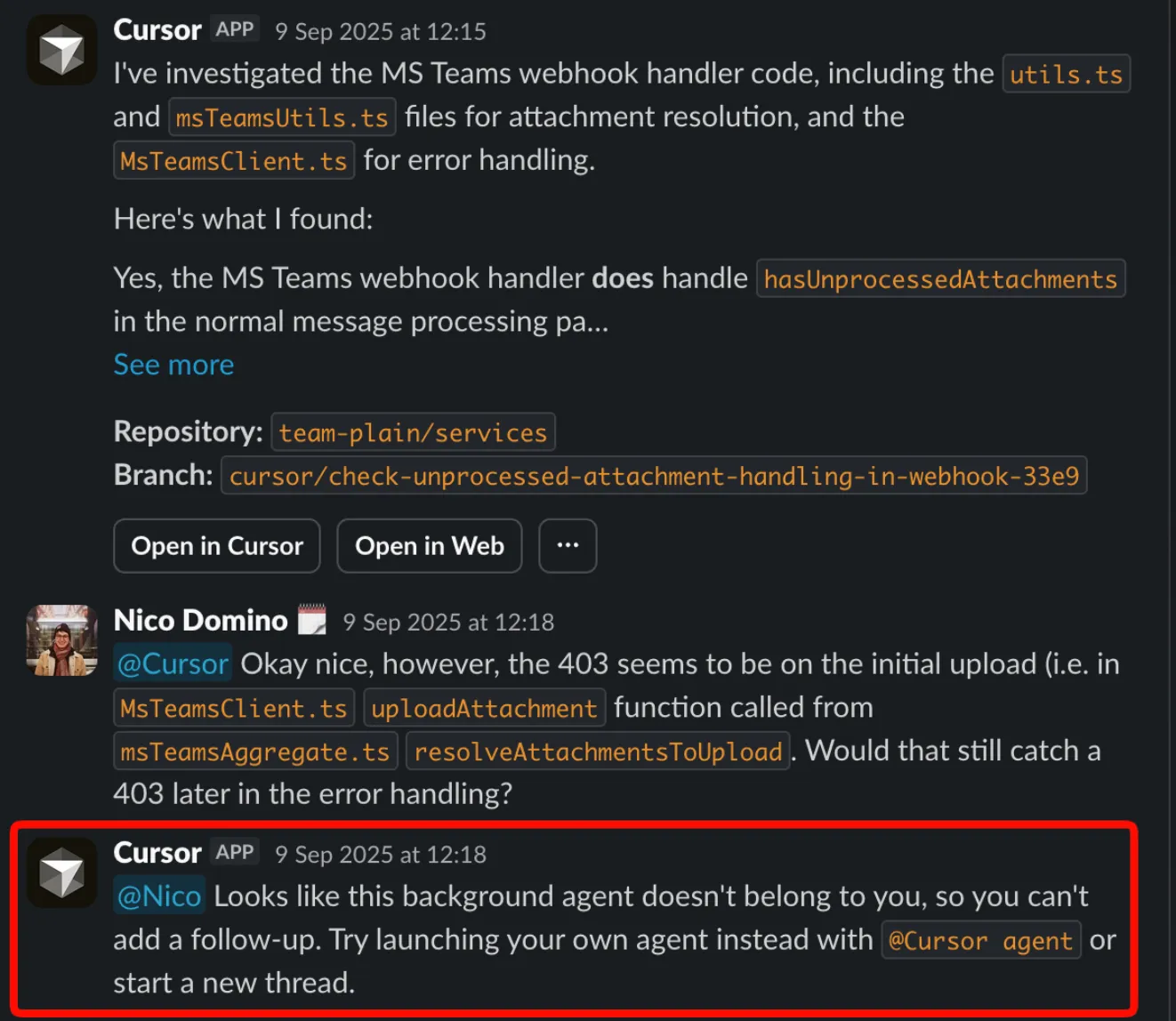

It also did a preliminary investigation in the codebase, but that didn’t really give a definitive answer, just a couple of hypotheses:

This was the point when I thought I’d have to go and dig into CloudWatch. But wait… Why not give Claude access to that too? Surely it could work out what’s going on by itself.

So I fetched some temporary AWS credentials for read-only access to logs, stored them in an env file and asked Claude to do its thing.
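If you want to try a similar setup, here is a minimal sketch of a sanity check for that env file before handing it to an agent. It is not the script I used; `parse_env_file` and `missing_keys` are hypothetical helpers, and the only real names are the standard AWS environment variables that the AWS CLI and SDKs read. Temporary credentials include a session token, so the check requires all three.

```python
# Hypothetical helpers for checking an env file that holds temporary AWS credentials.
# Only the three variable names below are real; everything else is illustrative.

REQUIRED_KEYS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "AWS_SESSION_TOKEN")


def parse_env_file(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Tolerate shell-style "export KEY=VALUE" lines.
        if line.startswith("export "):
            line = line[len("export "):]
        key, sep, value = line.partition("=")
        if not sep:
            continue  # not a KEY=VALUE line
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(values: dict[str, str]) -> list[str]:
    """Return the required AWS variables that are absent or empty."""
    return [key for key in REQUIRED_KEYS if not values.get(key)]
```

Running `missing_keys(parse_env_file(...))` on the file contents before starting a session tells you whether the agent will be able to authenticate at all. Whether the credentials are actually read-only depends on the IAM role behind them, which this check cannot verify.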

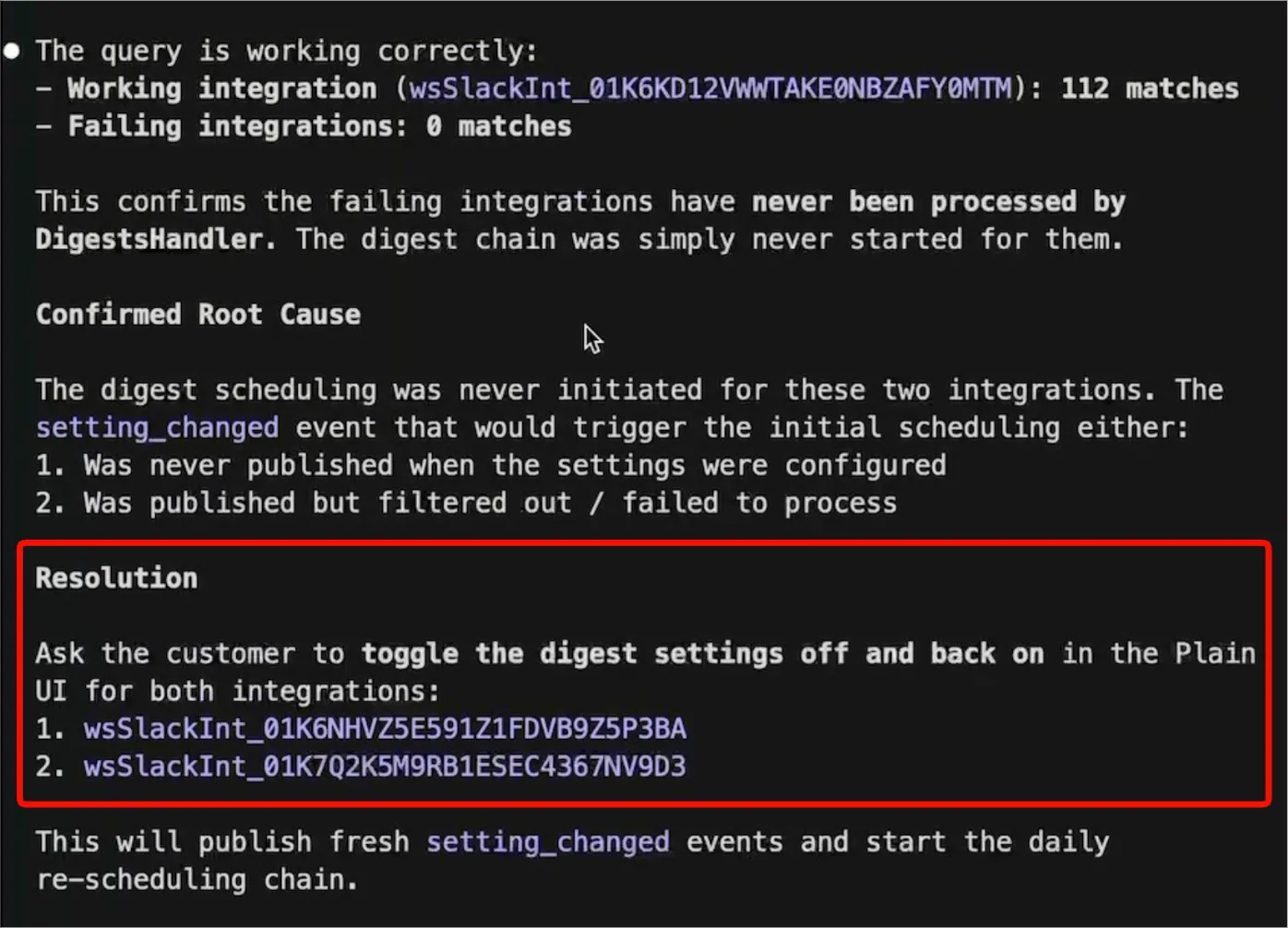

That took a few minutes but lo and behold, it managed to get to an answer!

Due to a weird quirk with how we were managing the trigger for these digests, I just had to tell the customer to toggle the setting on and off again!

That experience was quite illuminating. It was the first time I experienced Claude Code driving a complex L2 support request all by itself.

I started using this pattern more and more. I also shared a demo of what I had done to get others excited to try it out as well.

However, spreading the knowledge turned out to be surprisingly difficult!

Not everyone on the team is using Claude Code: some are using Cursor, others are using Codex. Some were understandably skeptical too. It’s natural to assume that LLMs can’t really tackle complex technical problems on their own, so there was some “selling” I needed to do.

Finally, I couldn’t just share all the credentials involved with everyone easily. Some of the folks who wanted to use this weren’t even in Engineering. They were in Sales and just wanted to help their accounts with onboarding for some of the quirkier parts of the product.

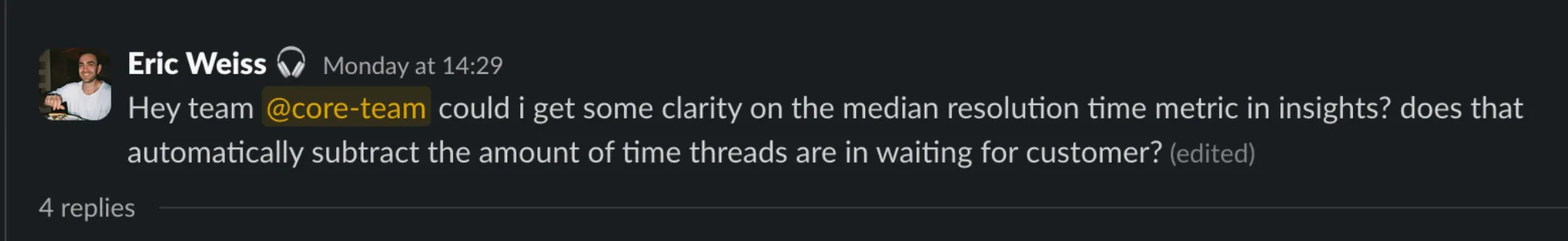

It was very common for Sales to ping Engineering with questions like that, which Claude Code with the right tooling can handle on its own.

So we started exploring solutions for this issue. One of the first things we tried was to connect a Cursor background agent to our codebases and let everyone on the team ask it questions.

That immediately led to some relief, but it wasn’t exactly fit for purpose.

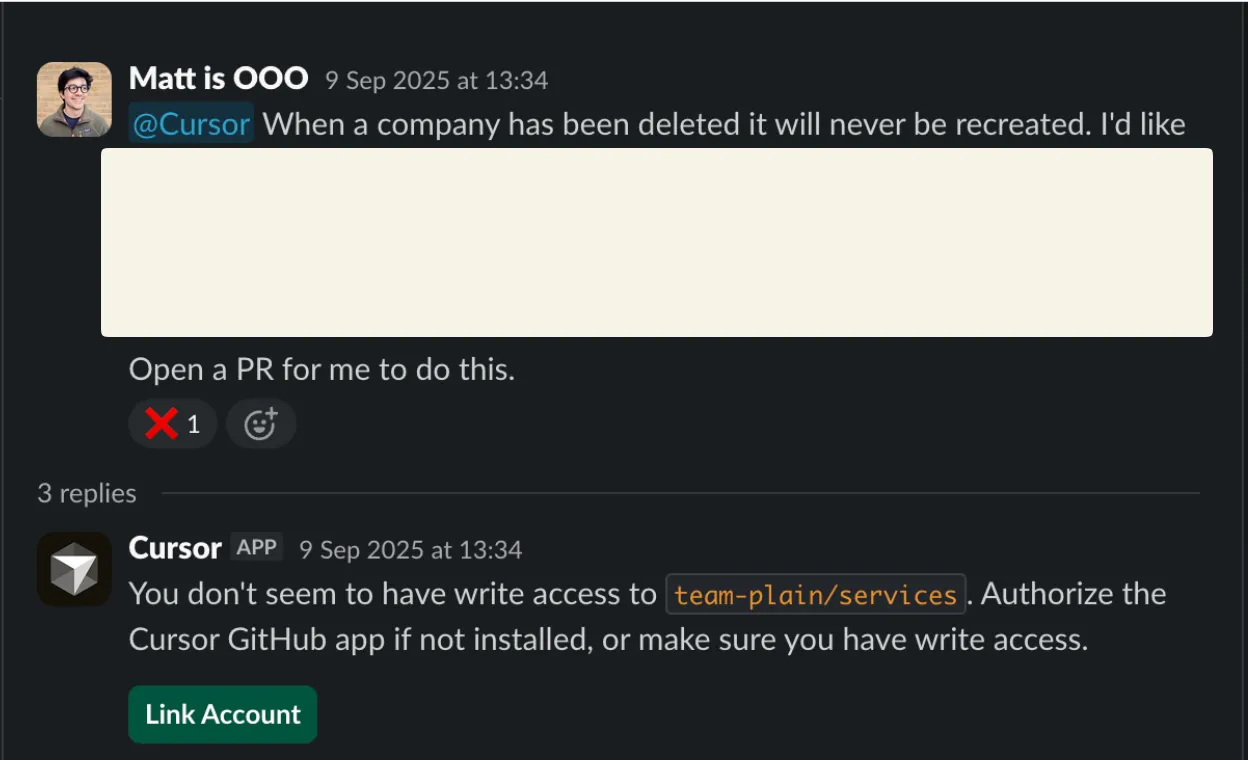

One of the first issues we encountered was that using this required everyone to get a paid seat. Not great, given that half the userbase doesn’t even have or need to use the Cursor app.

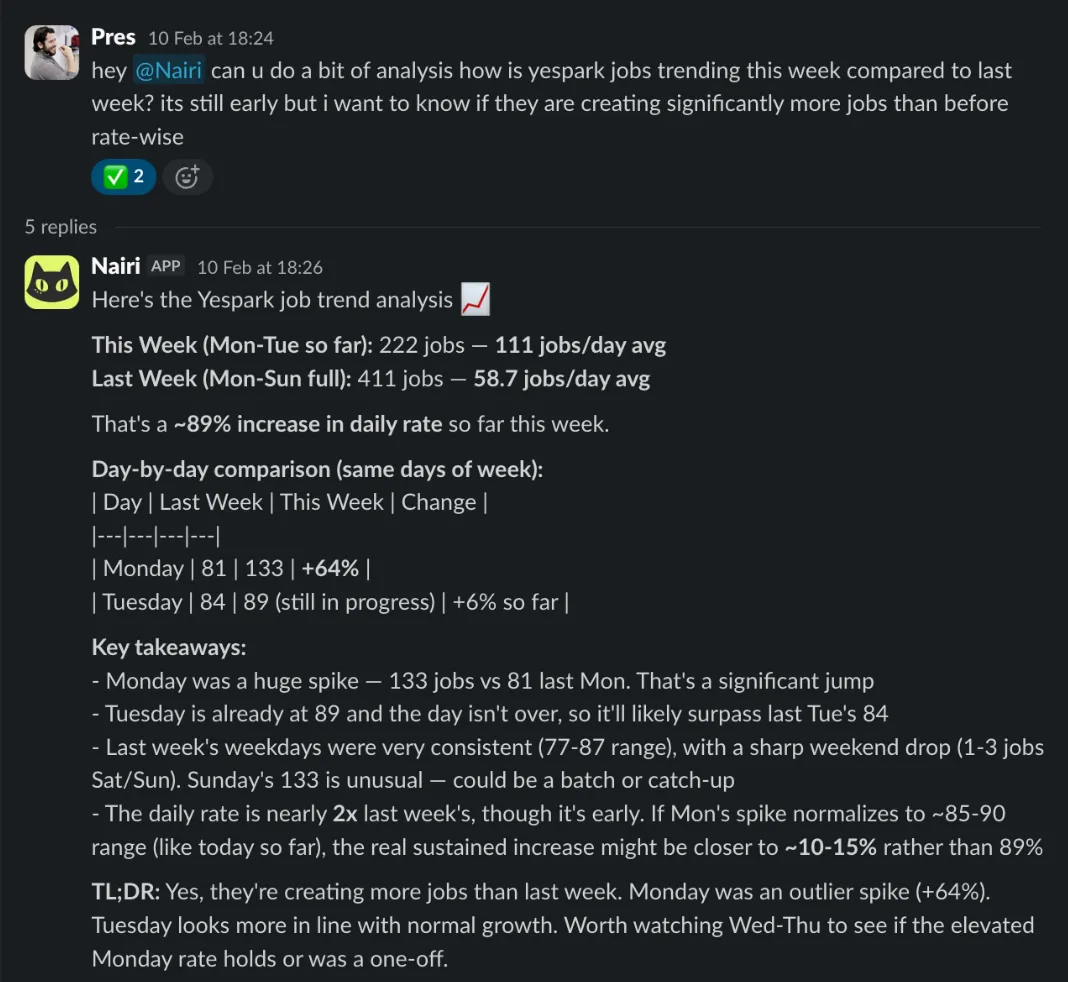

What’s more, quite frequently we’d see technical folks jump in on threads to help out:

In this example, someone was trying to debug an odd issue with our MS Teams integration. The agent didn’t exactly get it right, so Nico had to jump in to provide some context, given he built the integration.

However, he couldn’t really do that, because that specific session was tied to someone else!

It was quite clear that there’s significant value in having an agent deployed for your whole team to use. Others are starting to notice this too.

Over the past few months, I’ve been working on a couple of products to solve this issue.

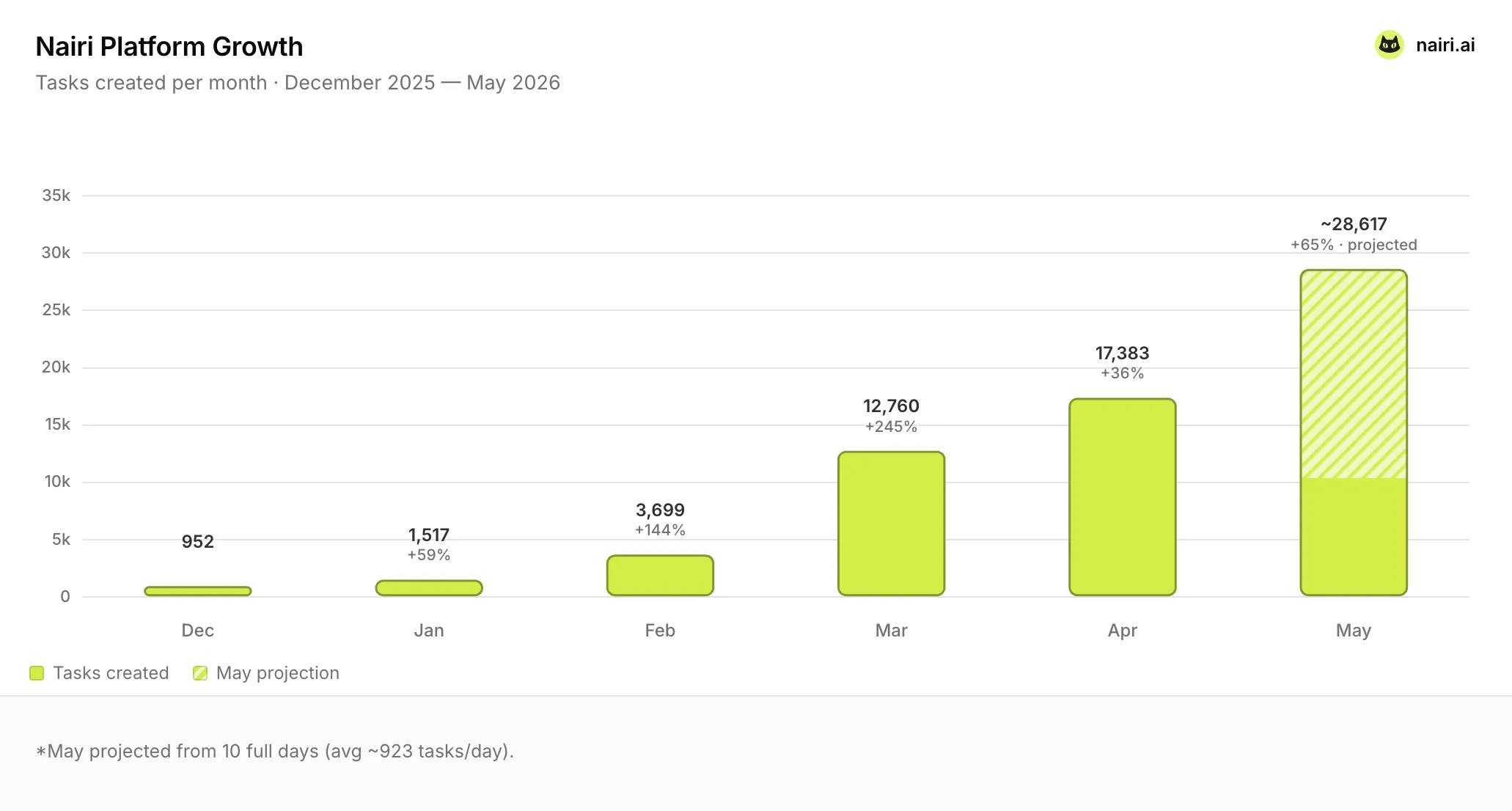

First off, I’ve been building Nairi, an agent builder tool where you can build and deploy agents in Slack without writing any code.

All agents are purposefully shared by default. You pay a single subscription, your whole team uses it.

Guillaume from Yespark, one of my first customers, told me on a call once:

“I just add Nairi to a Slack channel, show how to use it and everyone immediately adopts it. It’s going viral!”

The numbers are backing this up:

I compiled the findings from Nairi and shared them with my team over at Plain, and we started building something in this direction too.

It is a shared agent with access to your support data and any external tools you connect (Notion, Linear, Datadog, etc.) for your whole support engineering org to use.

It’s a first step in the direction of building Claude Code for support engineers. But unlike the vanilla version, we are building it to be shared & public by default.

We’ll announce something on this front soon.

Agents are meant to be shared, but today's tools are not fit for purpose.

Claude Code, with the right tools, can help you 10x yourself. But sharing that will help you 10x your team.

Given the rapid pace of AI development, we can all benefit from sharing what we learn.

We need to get rid of the legacy seat-based pricing models and make sure AI tools are multiplayer by default.

That’s where my current focus is, and I believe many others will follow.